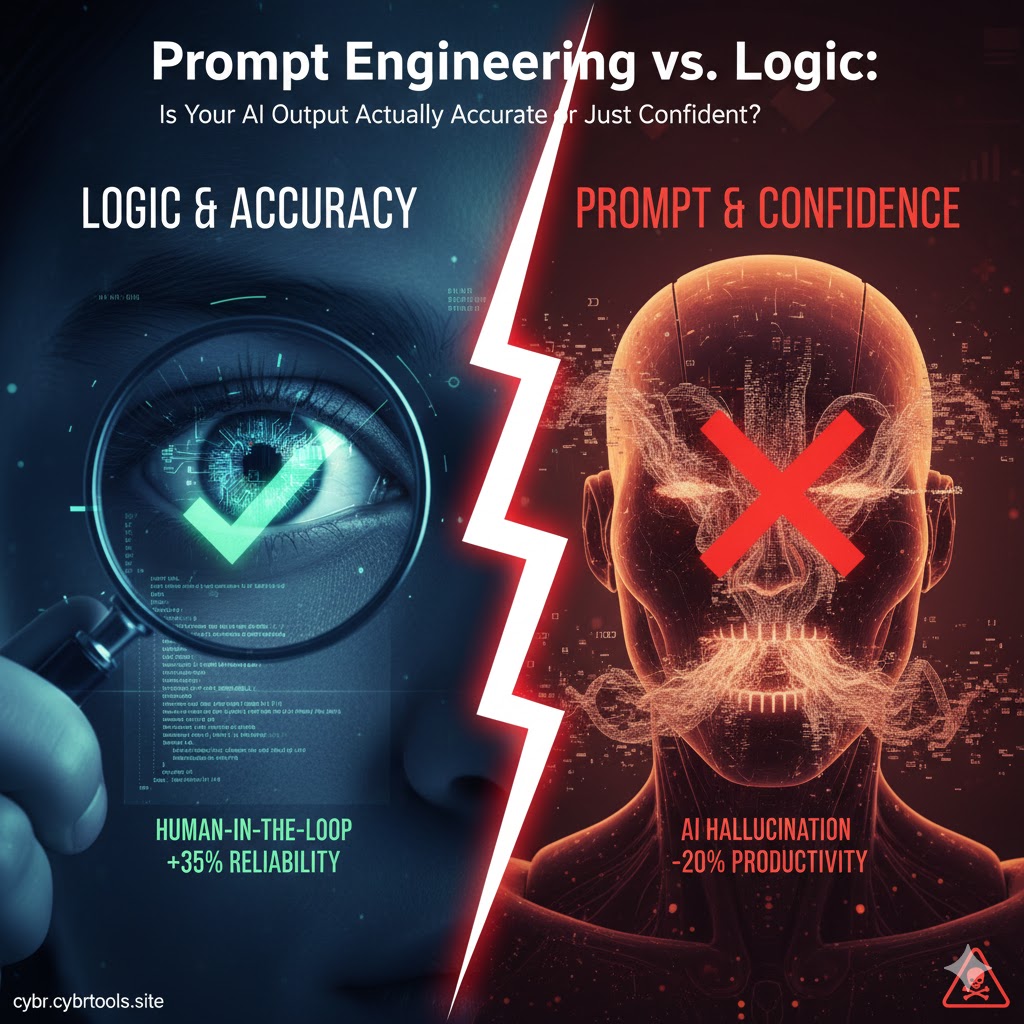

In 2026, AI has become an inseparable part of our professional lives. However, an old computing adage has never been more relevant: “GIGO—Garbage In, Garbage Out.” Today, we face a new, more dangerous challenge—The Confident Lie. When you query an AI, it responds with beautiful prose and absolute certainty, but is that answer “Logical” or just a “Statistical Guess”?

Many users mistake “Prompt Engineering” for a magic wand, when in reality, it is the intersection of Human Logic and Machine Probability. If your underlying logic is flawed, even the most sophisticated prompt will only yield a “high-end hallucination.”

The Psychology of Confident Hallucinations

“The Confidence Trap: Why AI speaks like an expert even when it’s hallucinating.”

Large Language Models (LLMs) are, at their core, “Next Token Predictors.” They aren’t programmed to find “The Truth”; they are programmed to find the “Next most likely word” that fits the pattern of your request. When you ask a technical question, the AI pulls patterns from its massive training data to construct an answer.

The crisis occurs when the AI lacks specific data but its training gives it little incentive to simply say, “I don’t know.” Instead, it generates a response that is grammatically flawless but logically bankrupt. This behavior is why critics call such a model a “Stochastic Parrot”: the AI is speaking, but it has no conceptual understanding of what it is saying.
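To make the "next token predictor" idea concrete, here is a minimal toy sketch. The candidate function names and their scores are invented for illustration; no real model produced them. The point is only that a probability distribution always sums to 1 over the tokens it knows, so some token always gets picked, whether or not any of them is true.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Hypothetical scores for the token that follows
# "The function to encrypt the payload is called ...".
# All three names are made up; none of them has to exist anywhere.
logits = {"encrypt_aes": 2.1, "secure_encrypt": 1.9, "cipher_data": 1.4}

probs = softmax(logits)
next_token = max(probs, key=probs.get)  # the most likely token wins

print(next_token)
print(round(sum(probs.values()), 6))
```

Nothing in this loop asks whether `encrypt_aes` exists. It only asks which continuation is most probable, and that is the gap a confident hallucination slips through.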

A Human Reflection: When AI “Lied” to My Face

“The Debugging Nightmare: How I wasted 4 hours on code that didn’t exist.”

A few months ago, I was working on a complex API integration. I asked a leading AI model for specific library functions to handle data encryption. The AI provided a very elegant, professional-looking code snippet. I spent hours trying to implement it, but I kept receiving “Undefined” errors that made no sense.

After three hours of frustration, I checked the official documentation. It turned out the “Library” and those specific “Functions” never existed! The AI had hallucinated their names so convincingly that I didn’t think to double-check the logic. That day, I learned a vital lesson: Never let the AI’s confidence override your common sense. We often defer to AI authority the same way we believe someone just because they are wearing a suit—don’t fall for the aesthetic of expertise.
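A check like the one below might have saved those hours. It is a minimal sketch, assuming the package in question is already installed in your environment and is one you trust, since importing a module runs its code. The helper name `symbol_exists` is my own, not part of any library.

```python
import importlib
import importlib.util

def symbol_exists(module_name: str, attr_name: str) -> bool:
    """Return True only if the module can be imported and exposes attr_name."""
    try:
        if importlib.util.find_spec(module_name) is None:
            return False  # nothing is installed under this name
        module = importlib.import_module(module_name)
    except (ImportError, ValueError):
        return False  # missing parent package or broken module
    return hasattr(module, attr_name)

# A real standard-library function passes the check.
print(symbol_exists("hashlib", "sha256"))

# A plausible-sounding but invented function does not.
print(symbol_exists("hashlib", "super_encrypt"))
```

This only proves that a name exists, not that it does what the AI claims. The official documentation is still the final authority.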

The Math of Prompt Logic: Structure > Keywords

“The Prompt Formula: Why your logic determines the ROI of your AI tools.”

Users often focus on using “power keywords” in prompts but ignore the logical flow. The accuracy of an AI output can be viewed through this simple formula:

$$\text{Accuracy} = (\text{Quality of Logic} \times \text{Context Density}) + \text{Prompt Clarity}$$

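If your logic is flawed, $\text{Quality of Logic} = 0$, and the product term vanishes no matter how much context you supply. All that remains is prompt clarity: a well-worded request built on nothing. The output will read well and still mislead, regardless of how “professional” or long your prompt is.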
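The formula is a rule of thumb rather than a measured law, but plugging in numbers shows how it behaves. The sketch below assumes each factor is scored on a 0 to 1 scale, which is my assumption and not something the formula itself specifies.

```python
def accuracy(quality_of_logic: float, context_density: float, prompt_clarity: float) -> float:
    """Heuristic score: (Quality of Logic x Context Density) + Prompt Clarity."""
    return quality_of_logic * context_density + prompt_clarity

# Illustrative values only, on an assumed 0-to-1 scale.
sound = accuracy(quality_of_logic=0.9, context_density=0.8, prompt_clarity=0.1)
flawed = accuracy(quality_of_logic=0.0, context_density=0.8, prompt_clarity=0.1)

print(sound > flawed)
print(flawed)  # only the prompt-clarity term is left
```

With the logic term at zero, adding more context changes nothing, because it is multiplied away. Only the clarity of the wording survives, and that is exactly what makes a hallucination sound convincing.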

Logic Building Steps:

- Chain of Thought (CoT): Explicitly tell the AI to “Think step-by-step.”

- Negative Constraints: Define what the AI should NOT do.

- Verification Loop: Ask the AI to critique its own answer for logical fallacies.
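The three steps above can be sketched as a small prompt builder. This is plain string assembly with hypothetical helper names (`build_prompt`, `build_critique_prompt`); it does not call any AI service, and the exact wording of each instruction is only one possible phrasing.

```python
def build_prompt(task: str, forbidden: list[str]) -> str:
    """Assemble a prompt with a chain-of-thought cue and negative constraints."""
    lines = [
        f"Task: {task}",
        # Chain of Thought: ask for the reasoning, not just the answer.
        "Think step-by-step and show your reasoning before the final answer.",
    ]
    if forbidden:
        # Negative constraints: state what the AI should NOT do.
        lines.append("Do NOT do any of the following:")
        lines.extend(f"- {rule}" for rule in forbidden)
    return "\n".join(lines)

def build_critique_prompt(answer: str) -> str:
    """Verification loop: send the answer back and ask for a self-critique."""
    return (
        "Review the answer below for logical fallacies, unsupported claims, "
        "and any library or function names you cannot verify.\n\n"
        f"Answer to review:\n{answer}"
    )

prompt = build_prompt(
    "Encrypt a file in Python using only the standard library.",
    ["Invent library or function names", "Skip error handling"],
)
print(prompt)
```

You would send `prompt` first, then pass the reply through `build_critique_prompt` as a second request. The critique is still produced by the same model, so treat it as a filter, not as proof.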

Prompt Engineering: A Skill or a Temporary Hack?

“The Skills Shift: Why 2026 belongs to the ‘Logical Thinkers,’ not just ‘Prompt Writers’.”

As we often discuss in our Security Tools section, technical accuracy is paramount. Prompt engineering is just a bridge; if the ground beneath it (your logic) is shaky, the bridge will collapse.

By 2026, AI models have become smarter at understanding “Natural Language.” You no longer need “secret hacks” to talk to AI; you need Clear Thinking. Those who lack logic in coding or cybersecurity will never be able to verify if the AI’s work is safe. It’s like owning a Ferrari but having no idea how to read a map—the speed is useless if you’re headed in the wrong direction.

Why Logic is Your Only Cybersecurity Defense

“The Human Firewall: Why AI-generated security code can be your biggest vulnerability.”

The use of AI in cybersecurity has skyrocketed. However, if you ask an AI to “Write a secure login script,” it might provide an outdated pattern that the current security landscape (the kind we cover under Digital Utilities and Cyber News) already considers unsafe.
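As one illustration of what "outdated" can look like, the sketch below contrasts unsalted MD5 password hashing with a salted, slow key-derivation function from Python's standard library. Which pattern an AI actually produces will vary; MD5 is my example, not one named above. The iteration count is an assumption as well, so check current guidance before relying on it.

```python
import hashlib
import hmac
import os

ITERATIONS = 600_000  # assumed work factor; verify against current guidance

def outdated_hash(password: str) -> str:
    """Unsalted MD5: fast to brute-force, identical passwords share a hash."""
    return hashlib.md5(password.encode("utf-8")).hexdigest()

def hash_password(password: str) -> tuple[bytes, bytes]:
    """Salted PBKDF2-HMAC-SHA256: returns (salt, derived key)."""
    salt = os.urandom(16)  # fresh random salt per password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest

def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the key and compare in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(candidate, expected)

salt, stored = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", salt, stored))
print(verify_password("wrong guess", salt, stored))
```

Both versions "work" in the sense that a login succeeds, which is why the weak one can pass a casual review. Telling them apart takes knowledge of why salting and slow hashing matter.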

This is where your Logic becomes the “Human Firewall.” You must understand concepts like SQL Injection or Cross-Site Scripting (XSS) to audit what the AI produces. The AI is your “Junior Assistant,” not your “Senior Architect.” Relying blindly on AI is essentially leaving a backdoor open in your own system.
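Here is a minimal sketch of the kind of audit this calls for, using Python's built-in `sqlite3` module and an in-memory database. The table, the user data, and the function names are hypothetical. The first lookup builds its SQL by string formatting, which is the classic SQL Injection hole; the second uses a parameterized query.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "a-secret"), ("bob", "b-secret")],
)

def find_user_unsafe(name: str):
    # VULNERABLE: user input is pasted directly into the SQL text.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()

def find_user_safe(name: str):
    # The ? placeholder makes the driver treat the input as data, not SQL.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()

payload = "nobody' OR '1'='1"

print(len(find_user_unsafe(payload)))  # every row leaks
print(len(find_user_safe(payload)))    # no user has that literal name
```

An AI can produce either version with equal confidence. Spotting the difference between them is the human's job.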

Final Thoughts: The Master and the Tool

“The New Hierarchy: Keeping the ‘Human’ in the loop of an AI-driven world.”

Verifying AI output is no longer an optional skill—it is a survival skill for the digital age. AI is “Confident” because it is a machine designed to please, but it is “Accurate” only when the human operator provides the logical guardrails.

The next time you receive a brilliant answer from an AI, ask yourself: “Is this actually true, or does it just sound good?” Always prioritize logic over the prompt. The machine does not check its own facts; it simply follows your instructions, even if those instructions lead to a logical cliff.
